Promoting collaboration across the theoretical sciences.

Initiative for the Theoretical Sciences

Please use this Zoom link for the Nobel symposium today (Friday, March 28):

https://us02web.zoom.us/j/81032314051?pwd=Qj7jUVbjAIIZKXICTVqP3wwf9SLp3i.1

Recent events

Video highlights

Spring 2024 Physics of Life: Students and Postdocs Edition

Single Molecules in Vivo

Fall 2023 Physics of Life: Students and Postdocs Edition

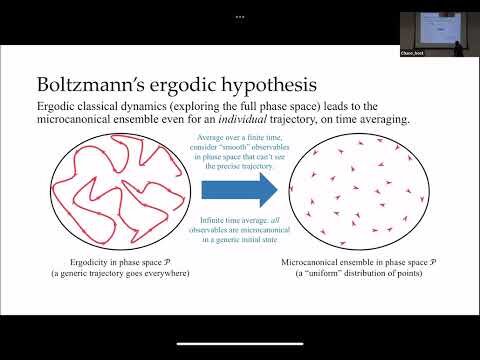

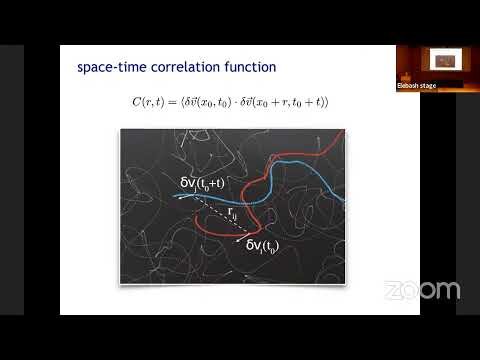

New Vistas in Chaos

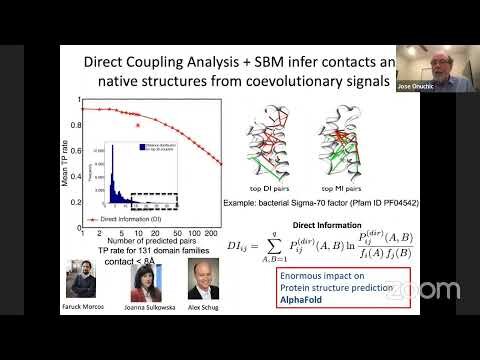

Theoretical Perspectives Enhancing Deep Learning Models

Information flow in bacterial chemotaxis

Neuroscience tutorial with Orie Shafer and Abhilash Lakshman: A Two Process Model of Fly Sleep

More and more neurons

Spring 2023 Physics of Life: Students and Postdocs Edition

Tutorial on Tensor Networks and Quantum Computing with Miles Stoudenmire

Fall 2022 Physics of Life: Students and Postdocs Edition

Physics of Behavior

Day 3 PM - Biophysics: Searching for Principles

Day 3 AM - Biophysics: Searching for Principles

Day 2 - Biophysics: Searching for Principles

On characterizing classical and quantum entropy - Arthur Parzygnat (Categorical Semantics of Entropy)

Higher entropy - Tom Mainiero (Categorical Semantics of Entropy)

Polynomial functors and Shannon entropy - David Spivak (Categorical Semantics of Entropy)

Operadic composition of thermodynamical systems - Owen Lynch (Categorical Semantics of Entropy)

Tutorial on Categorical Semantics of Entropy - John Baez and Tai-Danae Bradley

Quantum Master Equations for Open System Quantum Dynamics III

Breaking integrability in classical kink collisions - Patrick Dorey (Integrability and Integrability Breaking)

Generalized hydrodynamics and BBGKY hierarchy - Bruno Bertini (Integrability and Integrability Breaking)

Duality in the Tricritical Ising Model - Giuseppe Mussardo (Integrability and Integrability Breaking)

Quantum Master Equations for Open System Quantum Dynamics II: Consistent theories beyond phenomenological or perturbation methods

Quantum Master Equations for Open System Quantum Dynamics I: Polaron-based and other methods for intermediate/strong coupling regime

Entropy production, thermodynamic uncertainty, and information

Dimensionality and dynamics in networks of neurons

Quantum Information in Chemistry

Tutorial on Quantum Information in Chemistry